-

Welcome to VMware Lab

Transforming IT with VMware Cloud. Hello, dear readers! I hope this message finds you all in great spirits. I’ve been on a considerable hiatus for the last two years, since I changed roles from a Cloud Management Presales Solution Architect focused on everything Aria Suite to a Senior Technical Marketing…

-

Tanzu Kubernetes Grid (TKG) Clusters As-A-Service with vRealize Automation OOTB integration with vSphere with Tanzu.

In this blog I’m going to cover how vRealize Automation / vRealize Automation Cloud integrates out of the box with vSphere with Tanzu, helping empower DevOps teams to easily request, provision and operate Tanzu Kubernetes Grid (TKG) as a service. Overview: vRealize Automation is a Multi-Cloud…

-

vRealize Operations 8.x (vROPs) Memory Reporting and Failover when Guest Memory Metrics are not Available.

In this blog I am going to focus on the behavior of three of what are, in my opinion, the most commonly used memory metrics in vRealize Operations for vSphere-based objects: Memory | Usage (%), Memory | Workload (%), and Memory | Utilization (KB). All three metrics should be nearly…

-

VMware vRealize Automation ITSM Application 8.2 for ServiceNow

VMware vRealize Automation ITSM Application 8.2 is available now in the ServiceNow Store. VMware vRealize Automation speeds up the delivery of infrastructure and application resources through a policy-based self-service portal, running on-premises or as a service, that helps organizations increase business and IT agility, productivity, and efficiency. The solution…

-

Infoblox IPAM Plug-in 1.1 Integration with vRealize Automation 8.1 / vRealize Automation Cloud

Hello everyone, and welcome to VMwareLab, “Your VMware Cloud Management Blogger”. With vRealize Automation you can use an external IPAM provider to manage IP address assignments for your blueprint deployments. In this integration use case, you use an existing IPAM provider package, in this case an Infoblox package, and an…

-

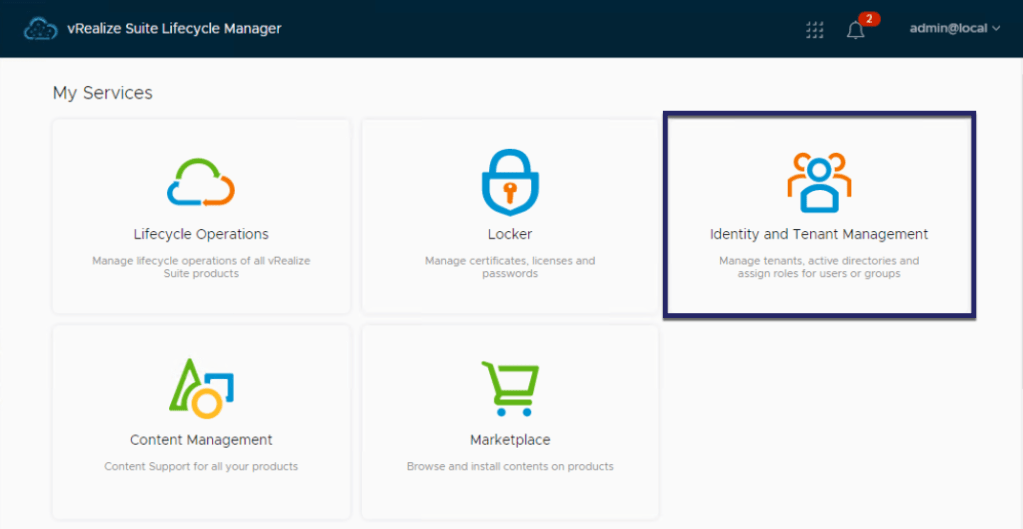

vRealize Automation 8.1 Multi-Tenancy Setup with vRealize Suite Lifecycle Manager 8.1

Today VMware is releasing VMware vRealize Automation 8.1, the latest release of VMware’s industry-leading, modern infrastructure automation platform. This release delivers new and enhanced capabilities to enable IT/Cloud admins, DevOps admins, and SREs to further accelerate their on-going datacenter infrastructure modernization and cloud migration initiatives, focused on the following key use…

-

How to Deploy vRA 8.0.1 while dealing with the Built-in containers root password expiration, preventing installations for vRealize Automation 8.0 and 8.0.1

Let’s get into it right away. A few weeks ago, the 90-day account expiry in the vRealize Automation 8.0 and 8.0.1 GA releases was exceeded for both the Postgres and Orchestrator services, which run today as Kubernetes pods. This issue is resolved in vRealize Automation 8.1 which is soon to…

-

vSphere Customization with Cloud-init While Using vRealize Automation 8 or Cloud.

After spending an enormous amount of time, which I think started somewhere in the summer of last year, to get vSphere Customization to work with Cloud-init while using vRealize Automation 8 or vRealize Automation Cloud as the automation platform to provision virtual machine deployments and install and configure the applications running on…

-

Part 3: vRealize Automation 8.0 Deployment with vRealize Suite Lifecycle Manager 8.0

In Part 2 of my vRealize Automation 8.0 blog video series, we upgraded vRealize Lifecycle Manager 2.1 to 8.0 by performing a side-by-side migration leveraging the vRealize Easy Installer while importing the management of both VMware Identity Manager 3.3.0 and the vRealize Suite 2018 environment. In this…

-

Part 2: Migration of vRSLCM 2.x Version to vRealize Suite Lifecycle Manager 8.0

If you happen to have an existing vRSLCM 2.x and vIDM 3.3.0 in your environment, then you will need the vRealize Easy Installer to migrate your existing vRSLCM 2.x instance to vRSLCM 8.0. Once your migration to vRSLCM 8.0 is completed, you can upgrade your vIDM instance to 3.3.1 since…

-

Part 1: vRealize Automation 8.0 Simple Deployment with vRealize Easy Installer

On October 17th, 2019, VMware announced the next major release of vRealize Automation. It uses a modern Kubernetes-based micro-services architecture and brings vRA cloud capabilities to the on-premises form factor. What’s New: The many benefits of vRA 8.0 include: Modern Platform using Kubernetes-based micro-services architecture that provides Easy…

-

vRealize Automation 7.6 (vRA 7.6) ITSM 7.6 Plug-in for ServiceNow

VMware vRealize Automation is a hybrid cloud automation platform that transforms IT service delivery. With vRealize Automation, customers can increase agility, productivity and efficiency through automation, by reducing the complexity of their IT environment, streamlining IT processes and delivering a DevOps-ready automation platform. If you want to know more about…

-

VMware Cloud Automation Services (CAS) – Cloud Assembly – Part 1

VMware’s cloud automation services are a set of cloud services that leverage the award-winning vRealize Automation on-premises offering. These services make it easy and efficient for developers to build and deploy applications. The cloud automation services consist of VMware Cloud Assembly, VMware Service Broker, and VMware Code Stream. Together, these…

-

vRealize Automation Extensibility Starts with SovLabs Plug-in – Part 1

When you start looking at vRealize Automation extensibility and how you can integrate it into your own datacenter ecosystem, or how you can accommodate certain extensibility use cases like provisioning workloads with custom host names based on business logic, or something as simple as running scripts or attaching tags post…

-

Installing and Configuring the vRealize Automation 7.5 (vRA 7.5) ITSM 5.0 / 5.1 Plug-in for ServiceNow

A new VMware vRealize Automation plug-in 5.0 for ServiceNow was released on November 2nd on the VMware Marketplace. It provides an out-of-the-box integration between the ServiceNow portal and the vRealize Automation 7.5 catalog and governance model. It enables ServiceNow users to deploy virtual machines using vRA…

-

vCenter Content Lifecycle Management with vRealize Suite Lifecycle Manager 2.0

Content lifecycle management in vRealize Suite Lifecycle Manager provides a way for release managers and content developers to manage software-defined data center (SDDC) content, including capturing, testing, and releasing it to various environments, with source control capabilities through GitLab integration. Content Developers are not allowed to set Release policy on end-points…

-

Deploying and Upgrading vRealize Automation with vRealize Suite LifeCycle Manager 2.0 – Part 2

Now that we have seen and understood how to deploy vRealize Automation 7.4 using vRealize Suite LifeCycle Manager 2.0 in Part 1 of the blog, we are ready to continue using vRSLCM to upgrade the vRA 7.4 instance we deployed to vRA 7.5. So let’s get started, eh! Right away…

-

Deploying and Upgrading vRealize Automation with vRealize Suite LifeCycle Manager 2.0 – Part 1

Wow, the title is such a mouthful, and so is this blog, so get your popcorn ready and get cosy, friends, because we are going to try to capture everything we need to do so we can use vRSLCM 2.0 to: Deploy vRA 7.4 and then Upgrade it to…

-

vRealize Automation 7.3 Plug-In for ITSM – Service Now 3.0 – Step by Step Guide!

Before I start, I want to give credit to Spas Kaloferov’s original blog on this subject. I think you should take the time to check it out, especially if you’re considering using ADFS, as his blog includes the ADFS configuration steps, whereas in my setup I didn’t use ADFS! there…

-

vRealize Automation 7.3 is Released! – What’s New – Part 3

Continuing again on the same theme – Make the Private Cloud Easy – that we mentioned in the two previous blog posts, vRA 7.3 What’s New – Part 1 and What’s New – Part 2, we will continue to highlight more of the NSX integration enhancements, and for this part of the series we…

-

vRealize Automation 7.3 is Released! – What’s New – Part 2

Continuing on the same theme – Make the Private Cloud Easy – that we mentioned in the previous blog post, vRA 7.3 What’s New – Part 1, we will highlight the NSX integration enhancements for just the NSX Endpoint and On-Demand Load Balancer that were added in this release. there are…

-

vRealize Automation 7.3 is Released! – What’s New – Part 1

Oh my god, I can’t believe that this is only a dot-release. As you read through the What’s New section in the vRA 7.3 Release Notes and look at the massive number of features we are releasing with this release, it’s just mind-blowing. I can’t describe the amount of excitement that…

-

![Virtual Container Host As A Service [VCHAAS] With vRealize Automation – vRA 7.x](https://vmwarelab.org/wp-content/uploads/2017/04/vmware_article_013-e1493058025278.jpg?w=813)

Virtual Container Host As A Service [VCHAAS] With vRealize Automation – vRA 7.x

vSphere Integrated Containers Engine is a container run-time for vSphere, allowing developers familiar with Docker to develop in containers and deploy them alongside traditional VM-based workloads on vSphere clusters, and allowing for these workloads to be managed through the vSphere UI in a way familiar to existing vSphere admins. vSphere…

-

Finally I’m a Blogger!

I can’t remember how many times I thought about having my own blog where I can write and post about topics that I’m passionate about around my line of work in Cloud Management here at VMware, in the hope that someone out there may find it helpful. Well my friends,…

-

Kubernetes APIs as the Universal Control Plane: How VMware Cloud Foundation 9 Leads the Way

The Kubernetes declarative API model has already transformed the way organizations build and operate modern applications. But in recent years, a clear industry trend has emerged: the same Kubernetes model is being extended beyond containerized workloads into the world of infrastructure itself. What started with kubectl apply for Pods and…

-

Monitoring vSphere IaaS Control Plane with VMware Aria Operations Management Pack for Kubernetes.

As a vSphere administrator, you can activate existing vSphere clusters for the vSphere IaaS Control Plane, formerly known as vSphere with Tanzu. This creates a Kubernetes control plane layer within the clusters’ ESXi hosts. vSphere clusters activated for the vSphere IaaS Control Plane are called Supervisors. Enabling the vSphere IaaS Control Plane transforms vSphere into…

-

Provisioning Microsoft SQL Server 2022 with VMware Aria Automation

In this blog, we will discuss setting up a Windows Server 2022 virtual machine template, installing and configuring Cloudbase-init, configuring Aria Automation, and downloading the MSSQL Server 2022 IaC YAML Aria Automation template from the VMwarelab GitHub Repo. I wanted to provision a Microsoft SQL Server 2022 by requesting it from…

-

VMware Aria Automation 8.x is On-premises Only.

VMware by Broadcom has announced the End of Availability (EoA) of the VMware Aria SaaS services, including VMware Aria Automation SaaS, as of February 2024. VMware will continue to support customers currently using VMware Aria SaaS services until the end of their subscription term. See VMware End Of Availability of Perpetual…
